Azure compute is an on-demand computing service for running cloud-based applications. It provides computing resources like multi-core processors and supercomputers via virtual machines and containers. It also provides serverless computing to run apps without requiring infrastructure setup or configuration. The resources are available on-demand and can typically be created in minutes or even seconds. There are four common techniques for performing compute in Azure:

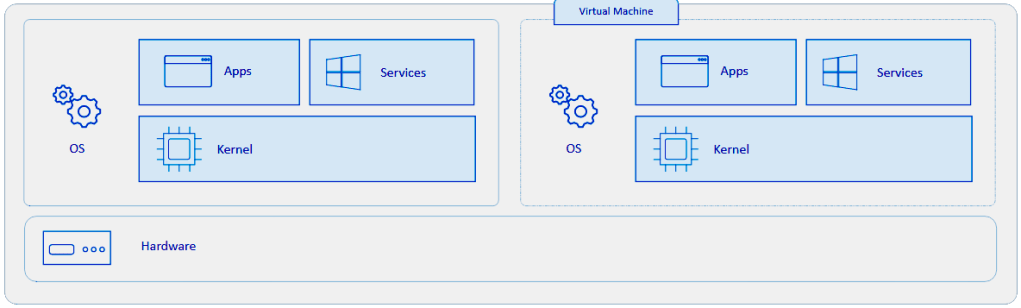

- Virtual machines, or VMs, are software emulations of physical computers. They include a virtual processor, memory, storage, and networking resources. They host an operating system (OS), and you’re able to install and run software just like a physical computer. And by using a remote desktop client, you can use and control the virtual machine as if you were sitting in front of it.

- Containers are a virtualization environment for running applications. Just like virtual machines, containers run on top of a host operating system. But unlike VMs, containers don’t include an operating system for the apps running inside the container. Instead, containers bundle the libraries and components needed to run the application and use the existing host OS running the container. For example, if five containers are running on a server with a specific Linux kernel, all five containers and the apps within them share that same Linux kernel.

- Azure App Service is a platform-as-a-service (PaaS) offering in Azure that is designed to host enterprise-grade web-oriented applications. You can meet rigorous performance, scalability, security, and compliance requirements while using a fully managed platform to perform infrastructure maintenance.

- Serverless computing is a cloud-hosted execution environment that runs your code but completely abstracts the underlying hosting environment. You create an instance of the service, and you add your code; no infrastructure configuration or maintenance is required, or even allowed.

See also Azure Compute Decision Tree

Virtual Machines

VMs run a complete operating system–including its own kernel–as shown in this diagram.

Azure supports the following types of virtual machines:

Azure Features for Virtual Machines:

- Availability sets – logical grouping of two or more VMs that help keep your application available during planned or unplanned maintenance.

- Virtual Machine Scale Sets let you create and manage a group of identical, load balanced VMs. Imagine you’re running a website that enables scientists to upload astronomy images that need to be processed. If you duplicated the VM, you’d normally need to configure an additional service to route requests between multiple instances of the website. Virtual Machine Scale Sets could do that work for you.

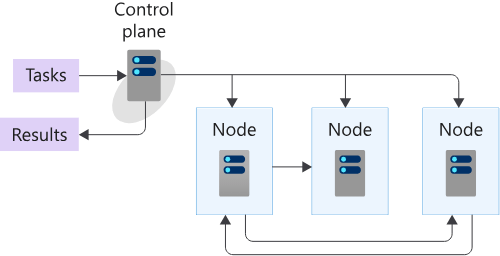

- Azure Batch enables large-scale job scheduling and compute management with the ability to scale to tens, hundreds, or thousands of VMs. Batch has several components that act together. An Azure Batch Account forms a container for all of the main Batch elements. Within the Batch account, you typically create Batch pools of VMs, which are often called nodes, running either Windows or Linux. You set up Batch jobs that work like logical containers with configurable settings for the real unit of work in Batch, known as Batch tasks. Batch is well-suited to highly parallel workloads, which are sometimes called embarrassingly parallel workloads.

- HPC Pack – a series of installers for Windows that lets you configure your own control and management plane and build highly flexible deployments of on-premises and cloud nodes.
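
The relationship between a Batch pool, a job, and its tasks can be sketched locally. The following is a minimal illustration and not the Azure Batch SDK: a thread pool stands in for a pool of nodes, a list of inputs stands in for a job, and `process_image` is a hypothetical task. Because the tasks share no state, they can run on any node in any order, which is what makes a workload embarrassingly parallel.

```python
from concurrent.futures import ThreadPoolExecutor


def process_image(image_id: int) -> str:
    """Hypothetical task: each call is independent of every other call."""
    return f"image-{image_id}: processed"


def run_job(task_inputs: list[int], node_count: int) -> list[str]:
    """Run every task of a job on a pool of `node_count` workers.

    The thread pool is a local stand-in for a Batch pool; real Batch
    nodes are separate VMs, not threads.
    """
    with ThreadPoolExecutor(max_workers=node_count) as pool:
        # map() returns results in input order even if tasks finish out of order.
        return list(pool.map(process_image, task_inputs))


results = run_job(task_inputs=[1, 2, 3, 4, 5], node_count=3)
print(results)
```

Adding nodes shortens the wall-clock time of the job without any change to the task code, since no task waits on another.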

Azure Virtual Desktop

Azure Virtual Desktop is a desktop and application virtualization service that runs in the cloud. It lets you use a cloud-hosted version of Windows from any location. Azure Virtual Desktop works across devices and operating systems, and you can connect through remote desktop client apps or most modern browsers.

Azure Virtual Desktop lets you use Windows 10 or Windows 11 Enterprise multi-session, the only Windows client-based operating system that enables multiple concurrent users on a single VM. It also lets you reduce costs by using eligible licenses with a modern virtual desktop infrastructure (VDI).

Containers

Azure Container Instances (ACI)

Azure Container Instances (ACI) offers the fastest and simplest way to run a container in Azure. You don’t have to manage any virtual machines or configure any additional services. It is a PaaS offering that allows you to upload your containers and execute them directly with automatic elastic scale.

Azure Container Instances is useful for scenarios that can operate in isolated containers, including simple applications, task automation, and build jobs. Here are some of the benefits:

- Fast startup: Launch containers in seconds.

- Per second billing: Incur costs only while the container is running.

- Hypervisor-level security: Isolate your application as completely as it would be in a VM.

- Custom sizes: Specify exact values for CPU cores and memory.

- Persistent storage: Mount Azure Files shares directly to a container to retrieve and persist state.

- Linux and Windows: Schedule both Windows and Linux containers using the same API.

- Confidential containers: Run Linux containers within a hardware-based, attested Trusted Execution Environment (TEE) with support for remote attestation.
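
Per-second billing combined with custom sizes means that the cost of a run scales with the requested CPU and memory and with the seconds the container is running. The sketch below illustrates that arithmetic only. The function name and both rates are hypothetical placeholders, not real Azure prices, and the actual billing model may include factors not shown here.

```python
def container_run_cost(
    seconds_running: int,
    cpu_cores: float,
    memory_gb: float,
    rate_per_core_second: float,
    rate_per_gb_second: float,
) -> float:
    """Estimate the cost of one container run under per-second billing.

    Both rates are caller-supplied placeholders, not real Azure prices.
    """
    if seconds_running < 0:
        raise ValueError("seconds_running must be non-negative")
    per_second = cpu_cores * rate_per_core_second + memory_gb * rate_per_gb_second
    return seconds_running * per_second


# A 90-second build job on 2 cores and 4 GB, with made-up rates.
cost = container_run_cost(90, 2, 4, rate_per_core_second=0.5, rate_per_gb_second=0.25)
print(cost)
```

A container that is stopped contributes zero seconds and therefore no cost in this model, which is why short-lived tasks and build jobs fit the service well.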

Azure Kubernetes Service (AKS)

Azure Kubernetes Service (AKS) is a complete orchestration service for containers, suited to distributed architectures that run multiple containers.

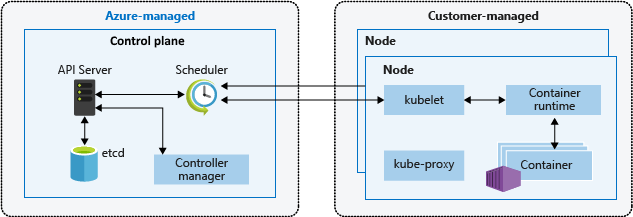

A Kubernetes cluster is divided into two components:

- Control plane: provides the core Kubernetes services and orchestration of application workloads.

- Nodes: run your application workloads.

The following services make up the control plane for a Kubernetes cluster:

- API server

- Backing store

- Scheduler

- Controller manager

- Cloud controller manager

The following services run on the Kubernetes node:

- Kubelet

- Kube-proxy

- Container runtime

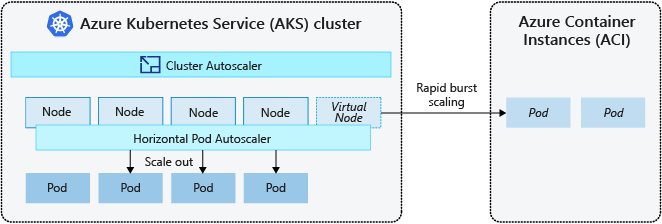

Kubernetes uses the horizontal pod autoscaler (HPA) to monitor resource demand and automatically scale the number of replicas. By default, the horizontal pod autoscaler checks the Metrics API every 15 seconds for any required changes in replica count. When changes are required, the number of replicas is increased or decreased accordingly. To respond to changing pod demands, Kubernetes also has a cluster autoscaler that adjusts the number of nodes based on the requested compute resources in the node pool. By default, the cluster autoscaler checks the Metrics API server every 10 seconds for any required changes in node count. To rapidly scale your AKS cluster, you can integrate with Azure Container Instances (ACI).
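
The replica calculation behind the horizontal pod autoscaler can be sketched in a few lines. The formula used below, `ceil(currentReplicas * currentMetric / targetMetric)`, comes from the Kubernetes documentation rather than from this article. The 10% tolerance mirrors the documented Kubernetes default, while the function name and the replica bounds are assumptions chosen for illustration.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
    tolerance: float = 0.1,
) -> int:
    """Return the replica count an HPA-style controller would aim for."""
    ratio = current_metric / target_metric
    # Inside the tolerance band the controller leaves the count unchanged.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    wanted = math.ceil(current_replicas * ratio)
    # Clamp to the configured bounds (illustrative defaults).
    return max(min_replicas, min(max_replicas, wanted))


# 4 replicas averaging 90% CPU against a 60% target.
print(desired_replicas(4, current_metric=90, target_metric=60))
```

The same function scales down when the observed metric falls below the target, and the clamp keeps a sudden spike from requesting more replicas than the configured maximum.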

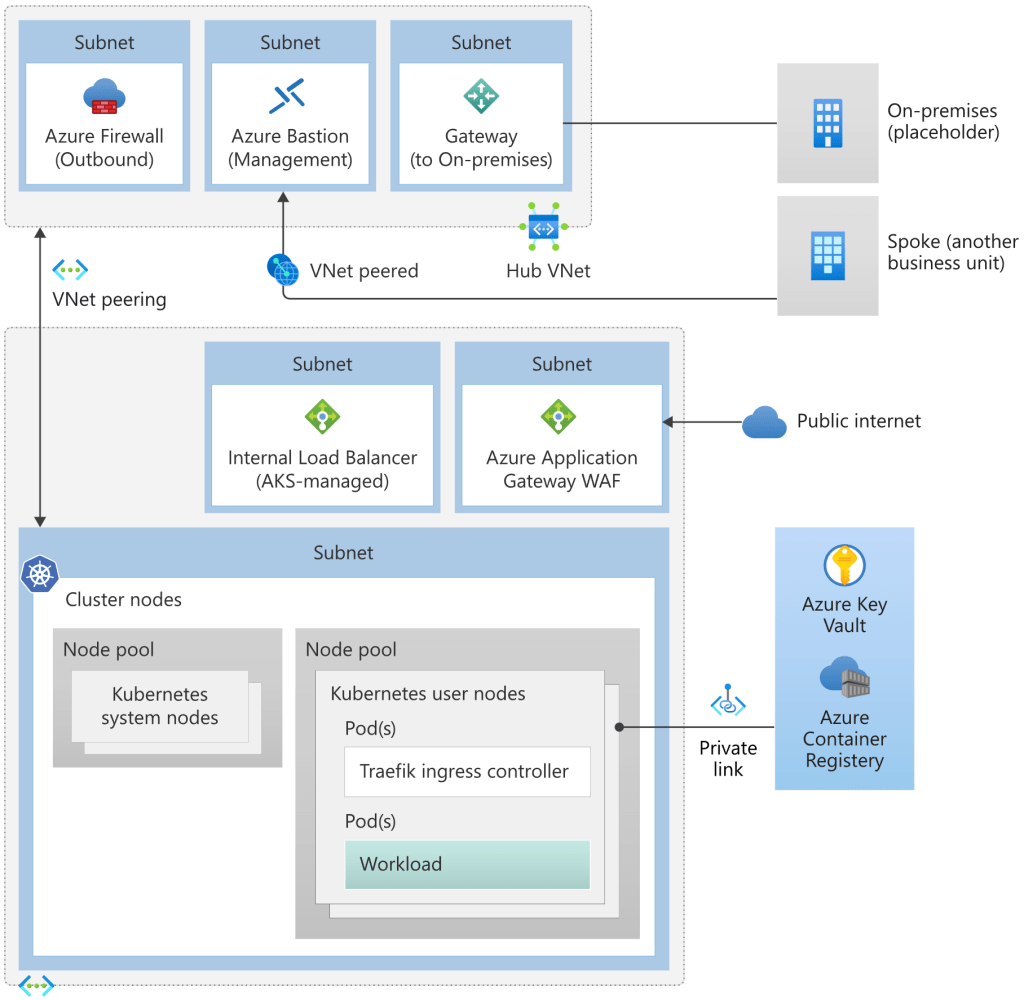

AKS reference architecture with hub-spoke network topology:

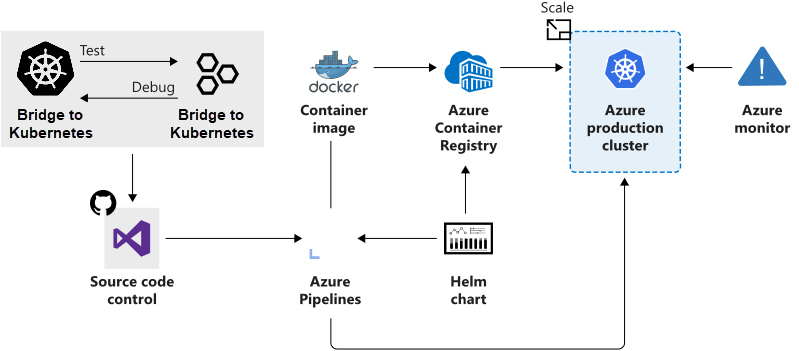

AKS supports the Docker image format so it is possible to use any development environment to create a workload, package the workload as a container and deploy the container as a Kubernetes pod. Standard Kubernetes command-line tools or the Azure CLI can be used to manage deployments. AKS also supports all the popular development and management tools such as Helm, Draft, Kubernetes extension for Visual Studio Code and Visual Studio Kubernetes Tools.

Advantages of AKS:

- Consistent management model for a multi-container environment that uses shared compute, networking, and storage resources

- Declarative deployment and management model

- Self-healing of pods

- Autoscaling of pods

- Autoscaling of cluster nodes in virtualized scenarios

- Automated rolling update and rollback of pod deployments

- Auto-discovery of new pod deployments

- Load balancing across pods running the same workloads
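
The declarative model and self-healing in the list above both rest on the same idea: you declare a desired replica count, and a controller repeatedly compares it with what is running. The sketch below is a simplified, in-memory illustration of one such reconciliation pass. The `reconcile` function and the pod naming scheme are hypothetical, and real Kubernetes controllers are considerably more involved.

```python
def reconcile(desired_count: int, pods: dict[str, str]) -> dict[str, str]:
    """Return the pod set after one reconciliation pass.

    `pods` maps a pod name to its status ("Running" or "Failed").
    """
    # Failed pods are dropped; replacements are created below.
    healthy = {name: status for name, status in pods.items() if status == "Running"}

    # Create new pods until the declared count is met, never reusing a name.
    index = 0
    while len(healthy) < desired_count:
        name = f"pod-{index}"
        if name not in pods:
            healthy[name] = "Running"
        index += 1

    # If more pods are running than declared, keep only the first ones by name.
    keep = sorted(healthy)[:desired_count]
    return {name: healthy[name] for name in keep}


state = {"pod-0": "Running", "pod-1": "Failed", "pod-2": "Running"}
print(reconcile(desired_count=3, pods=state))
```

Running the pass again on its own output changes nothing, which is the property that lets a controller loop indefinitely without side effects.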

Azure App Service

With Azure App Service, you can host most common app service styles, including:

- Web Apps. App Service includes full support for hosting web apps using ASP.NET, ASP.NET Core, Java, Ruby, Node.js, PHP, or Python. You can choose either Windows or Linux as the host operating system.

- API Apps. Much like hosting a website, you can build REST-based Web APIs using your choice of language and framework. You get full Swagger support, and the ability to package and publish your API in the Azure Marketplace. The produced apps can be consumed from any HTTP(S)-based client.

- WebJobs let you run a program (.exe, Java, PHP, Python, or Node.js) or script (.cmd, .bat, PowerShell, or Bash) in the same context as a web app, API app, or mobile app. WebJobs can be scheduled or run by a trigger, and they are often used to run background tasks as part of your application logic.

- Mobile Apps. Use the Mobile Apps feature of Azure App Service to quickly build a back-end for iOS and Android apps.

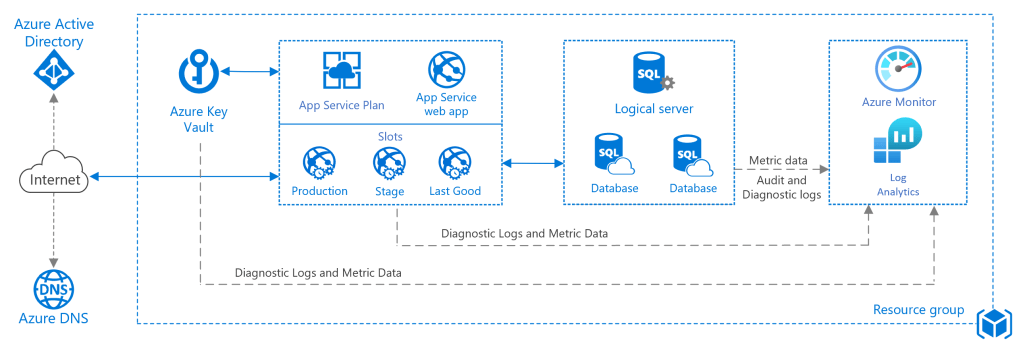

Reference architecture for basic web application:

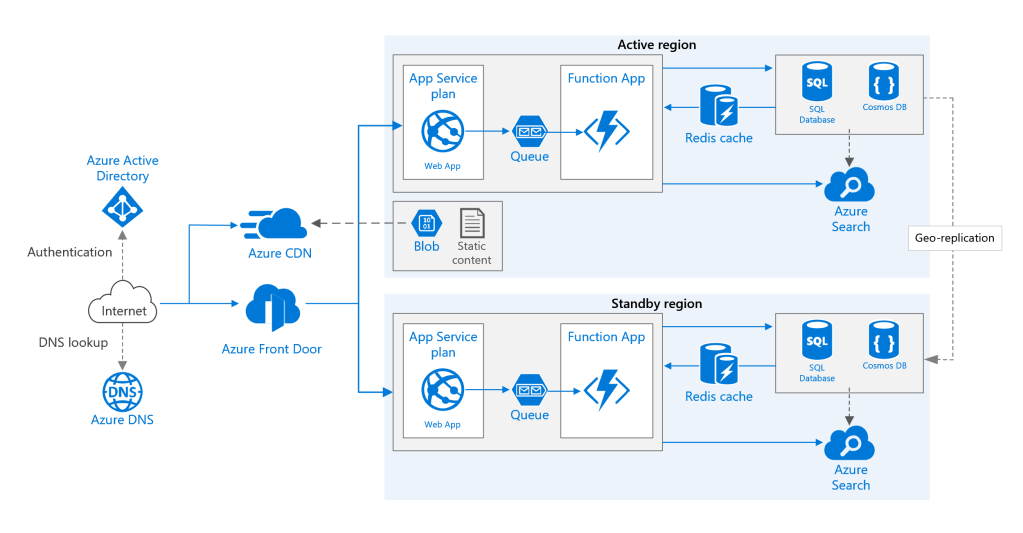

Reference architecture for highly available multi-region web application:

Serverless computing

Serverless computing is the abstraction of servers, infrastructure, and OSs. With serverless computing, Azure takes care of managing the server infrastructure and allocation/deallocation of resources based on demand. Infrastructure isn’t your responsibility. Scaling and performance are handled automatically, and you are billed only for the exact resources you use. There’s no need to even reserve capacity.

- Azure Functions scale automatically based on demand, so they’re a solid choice when demand is variable. With functions, Azure runs your code when it’s triggered and automatically deallocates resources when the function is finished. In this model, you’re only charged for the CPU time used while your function runs. Furthermore, Azure Functions can be either stateless (the default), where they behave as if they’re restarted every time they respond to an event, or stateful (called “Durable Functions”), where a context is passed through the function to track prior activity. Key features: code-first, built-in binding types, multiple programming languages.

- Azure Logic Apps execute workflows designed to automate business scenarios and built from predefined logic blocks. Every logic app workflow starts with a trigger, which fires when a specific event happens or when newly available data meets specific criteria. Many triggers include basic scheduling capabilities, so developers can specify how regularly their workloads will run. Each time the trigger fires, the Logic Apps engine creates a logic app instance that runs the actions in the workflow. These actions can also include data conversions and flow controls, such as conditional statements, switch statements, loops, and branching. Key features: design-first, collection of connectors, intuitive monitoring.

- Azure Event Grid is a serverless event broker that efficiently and reliably routes events from Azure and non-Azure resources and distributes them to registered subscriber endpoints. Event sources can be other applications, SaaS services, and Azure services. Publishers emit events but have no expectation about how the events are handled. Subscribers decide which events they want to handle.

- Azure Service Bus is a fully managed enterprise integration message broker. Service Bus can decouple applications and services. Data is transferred between different applications and services using messages. The data can be any kind of information, including structured data encoded in common formats such as JSON, XML, Apache Avro, or plain text.
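
The publisher and subscriber roles described for Event Grid can be illustrated with a small in-memory broker. This is a sketch of the pattern only and not the Event Grid API: the `EventBroker` class, the event shape, and the event type names are all hypothetical.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class EventBroker:
    """In-memory stand-in for an event broker; not the Event Grid API."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        """Register a handler for one event type."""
        self._subscribers[event_type].append(handler)

    def publish(self, event: dict) -> int:
        """Deliver the event to matching subscribers; return how many received it."""
        handlers = self._subscribers.get(event["type"], [])
        for handler in handlers:
            handler(event)
        return len(handlers)


broker = EventBroker()
received: list[dict] = []
broker.subscribe("image.uploaded", received.append)

# The publisher does not know who, if anyone, handles each event.
delivered = broker.publish({"type": "image.uploaded", "subject": "m31.png"})
ignored = broker.publish({"type": "image.deleted", "subject": "m31.png"})
print(delivered, ignored, received)
```

The publisher code is identical whether zero or many subscribers are registered, which is the decoupling the broker provides.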

Application Gateways

Here are some options for implementing an API gateway.

- Reverse proxy server. Nginx and HAProxy are popular reverse proxy servers that support features such as load balancing, SSL, and layer 7 routing. They are both free, open-source products, with paid editions that provide additional features and support options. Nginx and HAProxy are both mature products with rich feature sets and high performance. You can extend them with third-party modules or by writing custom scripts in Lua. Nginx also supports a JavaScript-based scripting module referred to as NGINX JavaScript. This module was formerly named nginScript.

- Service mesh ingress controller. If you are using a service mesh such as Linkerd or Istio, consider the features that are provided by the ingress controller for that service mesh. For example, the Istio ingress controller supports layer 7 routing, HTTP redirects, retries, and other features.

- Azure Application Gateway. Application Gateway is a managed load balancing service that can perform layer-7 routing and SSL termination. It also provides a web application firewall (WAF).

- Azure Front Door is Microsoft’s modern cloud Content Delivery Network (CDN) that provides fast, reliable, and secure access between your users and your applications’ static and dynamic web content across the globe. Azure Front Door delivers your content using Microsoft’s global edge network, with hundreds of global and local points of presence (PoPs) distributed around the world close to both your enterprise and consumer end users.

- Azure API Management. API Management is a turnkey solution for publishing APIs to external and internal customers. It provides features that are useful for managing a public-facing API, including rate limiting, IP restrictions, and authentication using Azure Active Directory or other identity providers. API Management doesn’t perform any load balancing, so it should be used in conjunction with a load balancer such as Application Gateway or a reverse proxy. For information about using API Management with Application Gateway, see Integrate API Management in an internal VNet with Application Gateway.
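
Several of the options above mention layer 7 routing, which means choosing a backend from the content of the HTTP request, most often its URL path. The sketch below shows one common approach, longest-prefix matching. The `route` function, the route table, and the backend names are hypothetical, and each real gateway has its own matching rules and configuration syntax.

```python
from typing import Optional


def route(path: str, routes: dict[str, str]) -> Optional[str]:
    """Return the backend for the longest route prefix matching `path`."""
    matches = [prefix for prefix in routes if path.startswith(prefix)]
    if not matches:
        return None
    # The most specific (longest) prefix wins.
    return routes[max(matches, key=len)]


ROUTES = {
    "/": "web-frontend",
    "/api": "api-default",
    "/api/orders": "orders-service",
}

for request_path in ("/api/orders/42", "/api/users", "/index.html"):
    print(request_path, "->", route(request_path, ROUTES))
```

Plain prefix matching is a simplification: it would also send `/apiary` to the `/api` backend, so production gateways usually match on whole path segments.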

Compute Provisioning

Custom script

- Ease of setup. The custom script extension is built into the Azure portal, so setup is easy.

- Management. The management of custom scripts can get tricky as your infrastructure grows and you accumulate different custom scripts for different resources.

- Interoperability. The custom script extension can be added into an Azure Resource Manager template. You can also deploy it through Azure PowerShell or the Azure CLI.

- Configuration language. You can write scripts by using many types of commands. You can use PowerShell and Bash.

- Limitations and drawbacks. Custom scripts aren’t suitable if your script needs more than one and a half hours to apply your configuration. Avoid using custom scripts for any configuration that needs reboots.

Azure Desired State Configuration extensions

- Ease of setup. Desired State Configurations (DSCs) are easy to read, update, and store. Configurations define what state you want to achieve. The author doesn’t need to know how that state is reached.

- Management. DSC democratizes configuration management across servers.

- Interoperability. DSCs are used with Azure Automation State Configuration. They can be configured through the Azure portal, Azure PowerShell, or Azure Resource Manager templates.

- Configuration language. Use PowerShell to configure DSC.

- Limitations and drawbacks. You can only use PowerShell to define configurations. If you use DSC without Azure Automation State Configuration, you have to take care of your own orchestration and management.
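
The idea that a configuration declares a target state rather than the steps to reach it can be shown with a test-then-set loop. DSC configurations themselves are written in PowerShell; the Python below only illustrates the pattern, and the function and setting names are hypothetical.

```python
def apply_configuration(desired: dict[str, str], actual: dict[str, str]) -> list[str]:
    """Bring `actual` to the desired state in place; return the settings changed."""
    changed = []
    for key, value in desired.items():
        # "Test": skip settings that are already in the desired state.
        if actual.get(key) == value:
            continue
        # "Set": correct only what has drifted.
        actual[key] = value
        changed.append(key)
    return changed


machine = {"iis": "absent", "firewall": "enabled"}
desired = {"iis": "present", "firewall": "enabled"}

first = apply_configuration(desired, machine)
second = apply_configuration(desired, machine)
print(first, second)
```

The second call changes nothing because the machine already matches the declaration. That idempotence is what makes it safe to reapply a configuration on a schedule to correct drift.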

Azure Automation State Configuration

- Ease of setup. Automation State Configuration isn’t difficult to set up, but it requires the user to be familiar with the Azure portal.

- Management. The service manages all of the virtual machines for you automatically. Each virtual machine can send you detailed reports about its state, and you can draw insights from this data. Automation State Configuration also helps you to manage your DSC configurations more easily.

- Interoperability. Automation State Configuration requires DSC configurations. It works automatically with your Azure virtual machines, and also with any virtual machines that you have on-premises or on another cloud provider.

- Configuration language. Use PowerShell.

- Limitations and drawbacks. You can only use PowerShell to define configurations.

Azure Resource Manager templates

- Ease of setup. You can create Resource Manager templates easily. You have many templates available from the GitHub community, which you can use or build upon. Alternatively, you can create your own templates from the Azure portal.

- Management. Managing Resource Manager templates is straightforward because you manage JavaScript Object Notation (JSON) files.

- Interoperability. You can use other tools to provision Resource Manager templates, such as the Azure CLI, the Azure portal, PowerShell, and Terraform.

- Configuration language. Use JSON.

- Limitations and drawbacks. JSON has a strict syntax and grammar, and mistakes can easily render a template invalid. The requirement to know all of the resource providers in Azure and their options can be onerous.
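
To make the shape of a Resource Manager template concrete, the sketch below builds a minimal one as a Python dictionary and serializes it to JSON. It declares a single availability set. The resource name is hypothetical, and the `apiVersion` value and property names are assumptions that should be checked against the current resource provider reference before use. The last lines show how a single stray comma makes the file unparseable.

```python
import json

template = {
    "$schema": "https://schema.management.azure.com/schemas/2019-04-01/deploymentTemplate.json#",
    "contentVersion": "1.0.0.0",
    "resources": [
        {
            "type": "Microsoft.Compute/availabilitySets",
            "apiVersion": "2023-03-01",  # assumed; verify the current version
            "name": "example-avset",  # hypothetical resource name
            "location": "[resourceGroup().location]",
            "sku": {"name": "Aligned"},
            "properties": {
                "platformFaultDomainCount": 2,
                "platformUpdateDomainCount": 5,
            },
        }
    ],
}


def is_valid_json(text: str) -> bool:
    """Return True if `text` parses as JSON."""
    try:
        json.loads(text)
        return True
    except json.JSONDecodeError:
        return False


text = json.dumps(template, indent=2)
# Simulate a hand-editing mistake: a doubled comma after contentVersion.
broken = text.replace('"1.0.0.0",', '"1.0.0.0",,')

print(is_valid_json(text), is_valid_json(broken))
```

Generating the JSON from a data structure, or validating it before deployment, avoids this class of syntax error, though it does not check that the resource types and properties are correct.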

Chef

- Ease of setup. The Chef server runs on the master machine, and Chef clients run as agents on each of your client machines. You can also use hosted Chef and get started much faster, instead of running your own server.

- Management. The management of Chef can be difficult because it uses a Ruby-based domain-specific language. You might need a Ruby developer to manage the configuration.

- Interoperability. Chef server only works under Linux and Unix, but the Chef client can run on Windows.

- Configuration language. Chef uses a Ruby-based domain-specific language.

- Limitations and drawbacks. The language can take time to learn, especially for developers who aren’t familiar with Ruby.

Terraform

- Ease of setup. To get started with Terraform, download the version that corresponds with your operating system and install it.

- Management. Terraform’s configuration files are designed to be easy to manage.

- Interoperability. Terraform supports Azure, Amazon Web Services, and Google Cloud Platform.

- Configuration language. Terraform uses HashiCorp Configuration Language (HCL). You can also use JSON.

- Limitations and drawbacks. Because Terraform is managed separately from Azure, you might find that you can’t provision some types of services or resources.

Azure Quantum (preview)

Azure Quantum (in preview as of June 2023) is the cloud quantum computing service of Azure, with a diverse set of quantum solutions and technologies. Azure Quantum provides a development environment for creating quantum algorithms for multiple platforms at once while preserving the flexibility to tune the same algorithms for specific systems.

Microsoft’s Azure Quantum offers a first-party resource estimation target that computes and outputs wall clock execution time and physical resource estimates for a program, assuming it is executed on a fault-tolerant error-corrected quantum computer. You can choose from pre-defined qubit parameters and quantum error correction schemes and define custom characteristics of the underlying physical qubit model. The resource estimator tool enables quantum innovators to prepare and refine solutions to run on tomorrow’s scaled quantum computers.

Note. Quantum hardware devices are still an emerging technology. These devices have some limitations and requirements for quantum programs that run on them.

Use cases for Azure Quantum:

- Simulate quantum mechanical problems, such as chemical reactions, biological reactions, or material formations.

- Quantum-inspired optimization finds the best solution to a problem given its desired outcome and constraints. Sample tasks: vehicle routing, supply chain management, scheduling, portfolio optimization, power grid management.

- Quantum machine learning uses quantum algorithms to solve machine learning tasks, thereby improving and often expediting classical machine learning techniques.

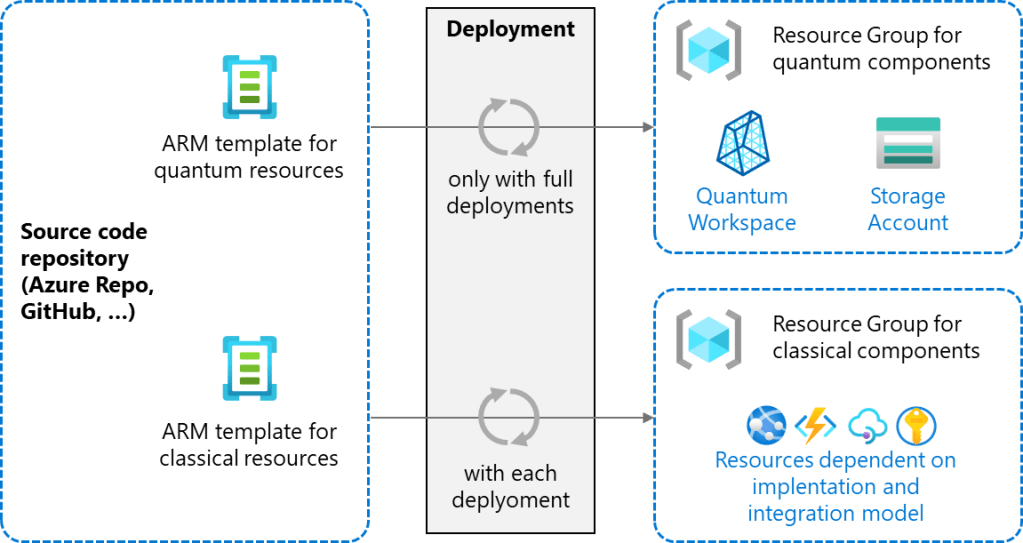

Below is a representation of using ARM templates to automatically provision quantum workspaces and the classical environments.

Decision Paths

Selecting an Azure service for container needs:

Reference Materials

- AZ-900. Azure Fundamentals: Describe Azure architecture and services

- AZ-305 Module: Design an Azure compute solution

- Choose an Azure compute service

- Choose the right integration and automation services in Azure

- Containers vs. virtual machines

- Batch Documentation

- What is Azure Batch?

- Azure Kubernetes Service (AKS)

- Azure Red Hat OpenShift

- Azure Container Apps

- Azure Functions

- Web App for Containers

- Azure Container Instances

- Azure Service Fabric

- Azure Container Registry

- Azure Architecture Center

- Use API gateways in microservices

- Azure Quantum documentation